Servo Magazine ( 2020 Issue-1 )

bots IN BRIEF (01.2020)

Armed and Dangerous?

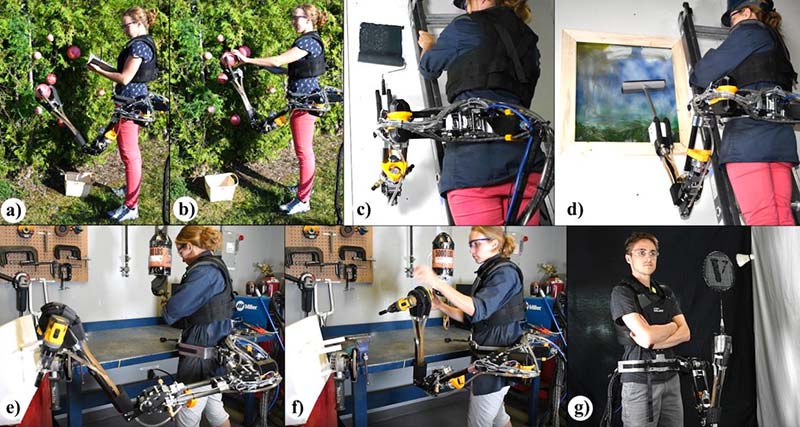

Researchers from Université de Sherbrooke in Canada have designed a waist-mounted, remote controlled hydraulic arm that can help you with all kinds of tasks. Oh, and you can smash through walls with it too.

This type of wearable robotic arm is known as “supernumerary.” The system created by the Canadian researchers (in partnership with Exonetik) has three degrees of freedom and is actuated by magnetorheological clutches and hydrostatic transmissions with the goal of “mimicking the performance of a human arm in a multitude of industrial and domestic applications.” (Like wall punching.)

Researchers at the Université de Sherbrooke in Canada developed a waist-mounted hydraulic arm that can help you with all kinds of tasks.

The hydraulic system provides comparatively high power, but the power system itself is connected to the user through a tether, minimizing how much mass the user has to actually wear (and keeping the inertia of the arm low) while also limiting mobility somewhat.

Off-board power does put a bit of a damper on the superhero potential, but in practical terms, users aren’t likely to be moving around all that much. If they are, mobile options could include being tethered to an autonomous vehicle that follows you around or perhaps a more portable backpack power unit.

The robotic arm itself weighs just over four kilograms — about the same as a real human arm. It can lift 5 kg and has a maximum end effector speed of 3.4 meters per second, with a workspace that’s restricted to keep it from smashing you into a wall.

The researchers envision a number of applications for their supernumerary arm, including: vegetable picking (a, b); painting a wall (c); washing a window (d); handing tools to a worker (e, f); and playing badminton (g). Photos courtesy of Université de Sherbrooke.

At the moment, there isn’t much in the way of autonomy since the arm is being controlled by a second human via a miniature handheld arm in a master-slave configuration. The researchers suggest that adding some sensors could allow the arm to do things like pick vegetables next to the user, as well as do more collaborative tasks like providing tool assistance.

You can think of it as being able to act as a co-worker, either directly increasing productivity by performing the same task as the user in parallel, or doing some different tasks in order to free up the user to do stuff that requires creativity or judgement.

HAMR-Jr Time

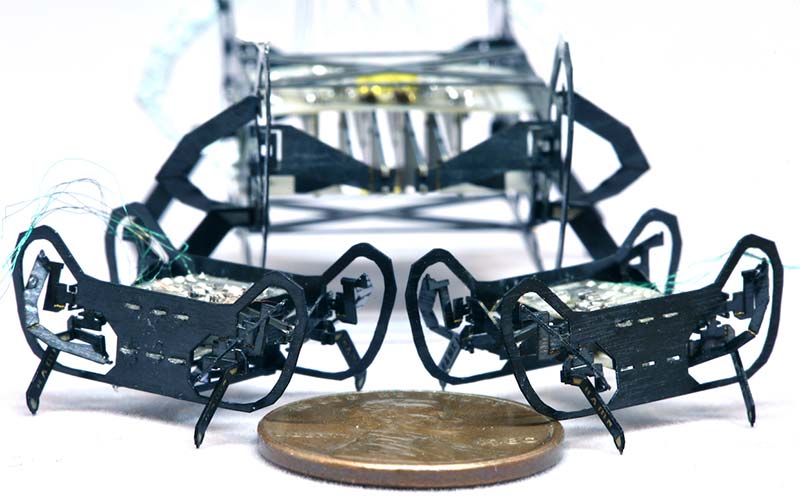

The Harvard Ambulatory MicroRobot (HAMR) was a bit chunky back in 2018, measuring about five centimeters long and weighing around three grams. However, a new version of HAMR has been introduced. Called HAMR-Jr, it’s significantly smaller at just a tenth of the weight and it comes up to about knee-high on a cockroach.

About the size of a penny, HAMR-Jr is one of the smallest and fastest insect-scale robots, capable of running at nearly 14 body lengths (30 centimeters) per second. Photo courtesy of Kaushik Jayaram/University of Colorado Boulder/Harvard SEAS.

HAMR-Jr may be tiny, but it’s no slouch. Piezoelectric actuators can drive it at nearly 14 body lengths (30 cm) per second, at a gait frequency of 200 Hz. The actuators can be cranked up even further, approaching 300 Hz, but the robot actually slows down past 200 Hz: that frequency hits a sort of resonant sweet spot that gives the robot as much leg lift and stride length as possible.
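As a rough sanity check, the quoted speed and gait frequency imply a body length and an average advance per gait cycle. The Python sketch below only does that arithmetic. The function names are made up for illustration, and because the quoted figures are rounded (“nearly 14”), the results are ballpark estimates.

```python
def implied_body_length_cm(speed_cm_s: float, body_lengths_per_s: float) -> float:
    """Body length implied by a speed quoted in both cm/s and body lengths/s."""
    return speed_cm_s / body_lengths_per_s


def advance_per_cycle_mm(speed_cm_s: float, gait_hz: float) -> float:
    """Average forward travel per gait cycle, in millimeters."""
    return speed_cm_s / gait_hz * 10.0  # 1 cm = 10 mm


if __name__ == "__main__":
    speed = 30.0  # cm/s, as quoted above
    print(f"Implied body length: {implied_body_length_cm(speed, 14.0):.2f} cm")
    print(f"Advance per cycle at 200 Hz: {advance_per_cycle_mm(speed, 200.0):.2f} mm")
```

That works out to a body length of roughly 2.1 cm and about 1.5 mm of forward travel per gait cycle at 200 Hz.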

Slow as a SlothBot

We tend to focus on motion a lot with robots, and the most dynamic robots get the most attention. This isn’t to say that highly dynamic robots don’t deserve our attention, but there are other robotic philosophies that, while perhaps less visually exciting, are equally valuable under the right circumstances.

Magnus Egerstedt, a robotics professor at Georgia Tech, was inspired by some sloths he met in Costa Rica to explore the idea of “slowness as a design paradigm” through an arboreal robot called SlothBot.

Georgia Tech roboticists are exploring the idea of “slowness as a design paradigm” through an arboreal robot called SlothBot. Photo courtesy of Georgia Tech.

Since the robot moves so slowly, you might ask, why use a robot at all? It may be very energy efficient, but it’s definitely not more energy efficient than a static sensing system that’s just bolted to a tree, for example. The robot moves, but it’s also going to be much more expensive (and likely much less reliable) than a handful of static sensors that could cover a similar area. The problem with static sensors, though, is that they’re constrained by power availability, and in environments like under a dense tree canopy, you’re not going to be able to augment their lifetime with solar panels.

If your goal is a long duration study of a small area (over weeks or months or more), SlothBot is uniquely useful in this context because it can crawl out from beneath a tree to find some sun to recharge itself, then crawl right back again to resume collecting data.

Healthcare Gone to the Dogs

Some coronavirus patients at Brigham and Women’s Hospital in Boston are being greeted by a new kind of nurse: a four-legged robot named “Spot.”

Boston Dynamics’ famed dog-like robot has been working with patients at the hospital to provide a buffer between potentially contagious cases and swamped health care officials.

“It’s kind of fun working with it,” Dr. Peter Chai recently told Digital Trends. “[Spot] is not that hard to control, and it gets us to where we need to be without being exposed.”

The robot — which is best known for a series of viral videos showing its ability to walk, jump, and even dance on four legs — has been lending a helping hand (leg?), according to authorities at the hospital and Boston Dynamics.

“Our hope is that these tools can enable developers and roboticists to rapidly deploy robots in order to reduce risks to medical staff,” Boston Dynamics wrote in a recent blog post announcing the collaboration.

Chai said Spot is being used in the hospital’s outdoor triage tent for patients who have upper respiratory symptoms but are not sick enough to stay in the hospital. Spot greets patients with an iPad-like device that allows them to see and talk to a physician virtually.

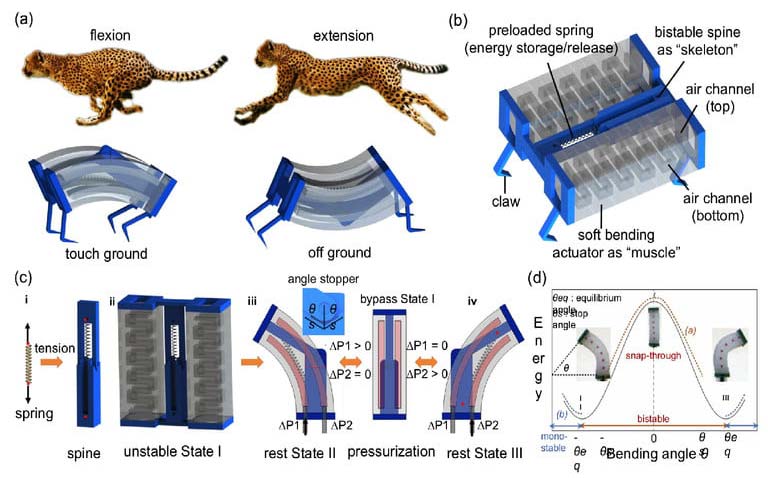

Cheetahs Never Prosper

The North Carolina State cheetah robot — called LEAP, or Leveraging Elastic instabilities for Amplified Performance — is a soft robot that can significantly outpace other soft robots by borrowing inspiration from the ways real cheetahs flex their spines to achieve speed and power.

Photo courtesy of Jie Yan/North Carolina State University.

By making the soft robot’s flexible spine able to quickly flex and extend to mimic the active role of a cheetah’s spine, it’s possible to quickly propel the soft robot forward on the ground (and even underwater).

Bot or Not?

For the past several years, there’s been heightened concern about the impact of so-called bots on platforms like Twitter. A bot in this context is a fake account associated with spreading fake news or misinformation online.

So, how exactly do you tell the difference between an actual human user and a bot? Clues such as Twitter’s basic default “egg” avatar, a username consisting of long strings of numbers, and a penchant for tweeting about certain topics might provide a few pointers. However, they’re hardly conclusive evidence.

That’s the challenge a recent project from a pair of researchers at the University of Southern California and University of London set out to solve. They have created an AI that’s designed to sort fake Twitter accounts from the real ones based on their patterns of online behavior.

“Detecting bots can be very challenging as they continuously evolve and become more sophisticated,” Emilio Ferrara, research assistant professor in the USC Department of Computer Science, told Digital Trends recently. “Existing tools that work well with older and simpler types of bots are not as accurate to predict more complex ones. So, it’s always exciting to identify new, previously unknown characteristics of the behavior of human users that are not yet exhibited by bots. This could [be used to help] improve the accuracy of detection tools.”

The researchers leveraged various datasets of hand-labeled examples of both fake and real Twitter account messages produced by other researchers in the community. In total, they trained their system on 8.4 million tweets from 3,500 human accounts and an additional 3.4 million tweets from 5,000 bots. This helped them to uncover a variety of insights into tweeting patterns.
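To put those dataset figures in perspective, the short Python sketch below works out the average number of tweets per account and how the two classes are balanced. It uses only the totals quoted above. The function and variable names are illustrative, and it says nothing about how the researchers actually split or weighted their data.

```python
def tweets_per_account(total_tweets: int, accounts: int) -> float:
    """Average number of tweets contributed by each account."""
    return total_tweets / accounts


HUMAN_TWEETS, HUMAN_ACCOUNTS = 8_400_000, 3_500
BOT_TWEETS, BOT_ACCOUNTS = 3_400_000, 5_000

human_avg = tweets_per_account(HUMAN_TWEETS, HUMAN_ACCOUNTS)
bot_avg = tweets_per_account(BOT_TWEETS, BOT_ACCOUNTS)

# Share of the combined dataset that comes from bots, by accounts and by tweets.
bot_account_share = BOT_ACCOUNTS / (BOT_ACCOUNTS + HUMAN_ACCOUNTS)
bot_tweet_share = BOT_TWEETS / (BOT_TWEETS + HUMAN_TWEETS)

if __name__ == "__main__":
    print(f"Human accounts: {human_avg:.0f} tweets each on average")
    print(f"Bot accounts:   {bot_avg:.0f} tweets each on average")
    print(f"Bots are {bot_account_share:.0%} of accounts, {bot_tweet_share:.0%} of tweets")
```

On these numbers, each human account contributes about 2,400 tweets on average versus about 680 per bot, so bots make up roughly 59 percent of the accounts but only about 29 percent of the tweets.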

For instance, human users are up to five times more likely to reply to messages. They also get increasingly interactive with other users over the course of a long Twitter session, while the length of an average tweet decreases during this same time frame. Bots, on the other hand, show no such changes.
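The two behavioral cues described here (how often an account replies, and whether its tweets get shorter as a session goes on) can be expressed as simple per-session features. The Python sketch below is a hypothetical illustration only: the `Tweet` class, the function names, and the use of a least-squares slope are assumptions made for this example, not the researchers’ actual method or code.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Tweet:
    text: str
    is_reply: bool


def reply_fraction(session: List[Tweet]) -> float:
    """Fraction of tweets in a session that are replies to other users."""
    if not session:
        return 0.0
    return sum(t.is_reply for t in session) / len(session)


def length_trend(session: List[Tweet]) -> float:
    """Least-squares slope of tweet length versus position in the session.

    A negative value means tweets get shorter as the session goes on.
    """
    n = len(session)
    if n < 2:
        return 0.0
    lengths = [len(t.text) for t in session]
    mean_x = (n - 1) / 2
    mean_y = sum(lengths) / n
    num = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(lengths))
    den = sum((x - mean_x) ** 2 for x in range(n))
    return num / den


if __name__ == "__main__":
    # Toy session: tweets shrink from 30 to 10 characters, two of three are replies.
    session = [Tweet("a" * 30, False), Tweet("b" * 20, True), Tweet("c" * 10, True)]
    print(reply_fraction(session), length_trend(session))
```

A classifier could then take features like these as input. On the pattern described above, a human session would tend to show a higher reply fraction and a negative length trend, while a bot session would show little change.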

Do the Worm

General Electric is getting into giant robot earthworms. While this might sound unlikely, GE’s research division has landed a big $2.5 million award from the Defense Advanced Research Projects Agency (DARPA) to ensure the project continues crawling along.

“What makes this so unique is that we’re really drawing inspiration from two sources in nature: the earthworm and tree roots,” Deepak Trivedi, who is leading this project for GE Research, recently told Digital Trends. “From the earthworm, we’re mimicking its fast rhythmic movements to rapidly and efficiently form the tunnels we’re trying to form. And from the tree roots, we’re mimicking [their] scale and ability to create large force by studying how roots grow into the ground. It’s the combination of these two forces of nature that makes our project — and robot — so unique.”

The soft robot tunneler is made up of large segmented pieces which act like the fluid-filled “hydrostatic skeleton” found in invertebrates. The robot’s artificial muscles move like a real earthworm’s in order to propel it forward, while the segmented design also gives it impressive freedom of movement and the ability to maneuver into difficult-to-reach places.

Draganfly Drones Do Monitoring

In a rush to combat the global spread of the deadly coronavirus (COVID-19), Draganfly will deploy “pandemic drones” to remotely monitor and detect people with infectious and respiratory conditions to help stop the spread of the disease.

The Draganfly drones will be fitted with a specialized sensor and computer vision system that can monitor temperature, heart and respiratory rates, as well as detect people sneezing and coughing in crowds and other places where groups of people may work or congregate.

The Draganflyer Commander UAV is a remotely operated miniature helicopter designed to carry wireless camera systems. Photo courtesy of Draganfly.

Draganfly will serve as the global systems integrator for the Vital Intelligence Project: a health and respiratory monitoring platform from Vital Intelligence, Inc. The breakthrough technology was developed in a collaboration between the University of South Australia and the Defence Science and Technology Group (DST), which is part of Australia’s Defence Department. The project has an initial budget of $1.5 million.

The sensing system uses existing and new camera networks, UAVs, and remotely piloted aircraft systems for health monitoring and detection of infectious and respiratory conditions, including monitoring temperatures, heart rates, and respiratory rates.

The drones can monitor people in public crowds, workforces, airlines, cruise ships, convention centers, border crossings, or critical infrastructure facilities. The technology can also be used to monitor potential at-risk groups, such as seniors in care facilities.

Follow the Bouncing Ball

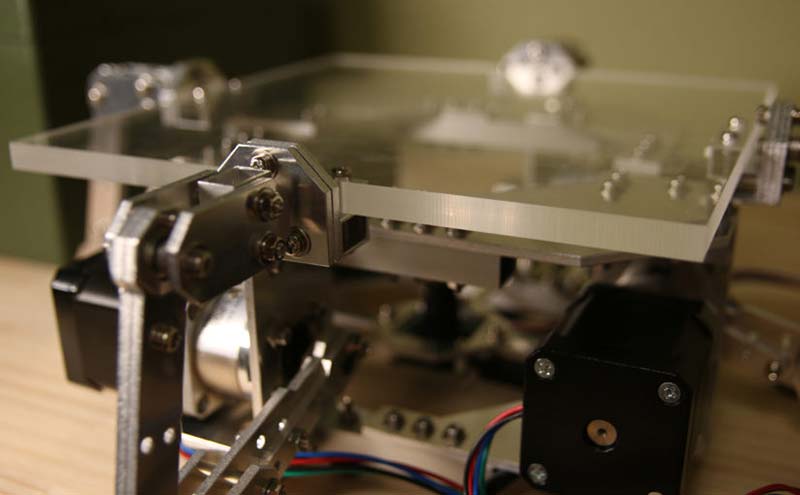

T-Kuhn over at Electron Dust started thinking about ball juggling machines in 2015. He wrote about his first attempts at building them in an Electron Dust blog post in 2017, then wrote another post about his then-newest build in 2018. Now, in 2020, the quest to get a machine to juggle a ping pong ball reliably has come to an end: the current build is able to keep the ball bouncing for hours.

The machine requires the following components to work:

- 1x Teensy 4.0 microcontroller running the code at https://github.com/T-Kuhn/HighPrecisionStepperJuggler/tree/master/Arduino/HighPrecisionStepperJuggler

- 4x StepperOnline DM442S stepper motor drivers

- 4x Nema 17 stepper motors with 5:1 planetary gearbox

- 1x 48V 8A power supply

- 1x e-con Systems See3CAM_CU135 camera

- 1x Windows computer with OpenCV installed on it

- All the parts defined in the Fusion360 project described at https://github.com/T-Kuhn/HighPrecisionStepperJuggler/tree/master/Autodesk%20Fusion360%20data

- The custom Windows Application (made with Unity) described at https://github.com/T-Kuhn/HighPrecisionStepperJuggler/tree/master/Unity/HighPrecisionStepperJuggler/Build

You can watch it bounce here: https://electrondust.com/2020/03/01/the-octo-bouncer.

Gonna Burst Your Bubble

While folks around the world are working on different artificial pollination systems, there’s really no replacing the productivity, efficiency, and genius of bees. However, researchers at the Japan Advanced Institute of Science and Technology (JAIST) have come up with an alternate method of pollination: pollen-infused soap bubbles blown out of a bubble maker mounted to a drone. And it apparently works really well.

Researchers in Japan developed a drone equipped with a bubble maker for autonomous pollination.

Most other examples of robotic pollination have involved direct contact between a pollen-carrying robot and a flower. This works, but is not really efficient since it requires the robot to do what bees do: identify and localize individual flowers and then interact with them one at a time for reliable pollination. The problem becomes scaling to cover an entire orchard.

In a recent issue of iScience, JAIST researcher Eijiro Miyako described how his team had been working on a small pollinating drone that had the unfortunate side-effect of frequently destroying the flowers that it came in contact with. Frustrated, Miyako needed to find a better pollination technique. While blowing bubbles at a park with his son, Miyako realized that if those bubbles could carry pollen grains, they’d make an ideal delivery system.

To show that their bubble pollination approach is scalable, the researchers equipped a drone with a bubble machine capable of generating 5,000 bubbles per minute. In one experiment, the method resulted in an overall success rate of 90 percent when the drone moved over flowers at 2 m/s at a height of 2 m. Photos courtesy of iScience.

You can create and transport bubbles very efficiently, generate them easily, and they literally disappear after delivering their payload. They’re not targetable, of course, but it’s not like they need to chase anything, and there’s absolutely no reason not to compensate for low accuracy with high volume.
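For a sense of the volume involved, the reported bubble rate and flight speed give a rough figure for how many bubbles are released per meter of flight path. The Python sketch below is back-of-the-envelope arithmetic using only the numbers quoted in the caption above. The function name is illustrative, and the calculation ignores wind, bubble drift, and how many bubbles actually reach a flower.

```python
def bubbles_per_meter(bubbles_per_minute: float, speed_m_per_s: float) -> float:
    """Bubbles released per meter of drone travel at a constant speed."""
    bubbles_per_second = bubbles_per_minute / 60.0
    return bubbles_per_second / speed_m_per_s


if __name__ == "__main__":
    density = bubbles_per_meter(5_000, 2.0)
    print(f"About {density:.0f} bubbles released per meter of flight")
```

At 5,000 bubbles per minute and 2 m/s, that is roughly 83 bubbles per second, or about 42 bubbles for every meter the drone travels.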

According to Miyako:

“We accidentally found that natural pollen grains can be easily incorporated into a soap film and flown in the air using various bubble devices.”
