Pokémon GO — The Killer App for Augmented Reality?

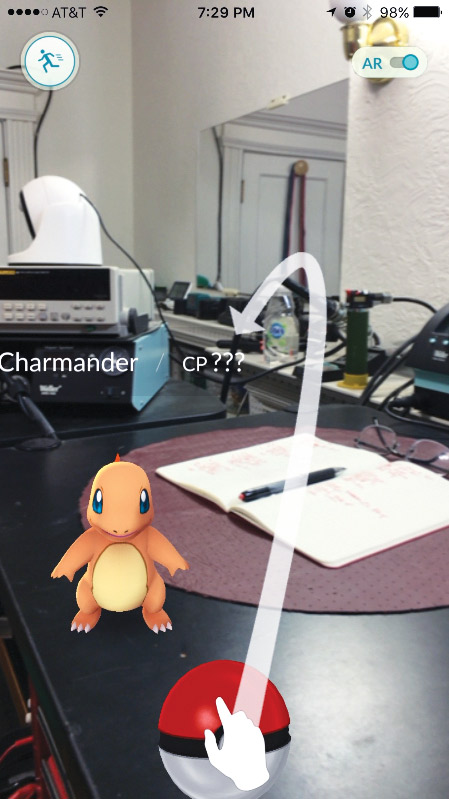

Well, it’s finally here — the killer app for augmented reality. I’ve worked on and written about augmented reality (AR) applications for years, but the technology of mixing synthetic images, sounds, and other media with reality has never expanded past the early adopter crowd. At least until now. For the past few weeks, my workbench has been invaded by creatures from the Pokémon GO game, as you can see in the accompanying photo.

Unless you’ve been living in a cave without Wi-Fi, you’ve no doubt seen users of Pokémon GO in action, all part of a worldwide Easter egg hunt. The mobile game, available for free download on the major platforms, features virtual creature overlays that are seamless and real enough to be captivating. As with early texting adopters, there are the usual reports of people walking into walls, oncoming traffic, and the like, all self-limiting behaviors. Pokémon GO may be a bright light that burns quickly, but it has brought AR into the mainstream consciousness. All of this attention isn’t hurting the Nintendo brand, either.

Prior to its debut as the technology behind Pokémon GO, AR was known primarily for use in advanced heads-up displays in military aircraft; in both training and actual tools for surgeons and medics (think MRI overlaid on a patient in surgery, as seen through AR goggles); and, of course, in the intricate overlays of real and synthetic characters and scenery in modern movies. Purists might argue that only real-time overlays constitute AR, but the overall effect is the same: a blurring of the real and the synthetic.

My work with AR has focused on training applications for the military, such as AR radiation findings on real patients. For example, medics use real Geiger counters on real patients, and the counters register radiation counts that mimic various scenarios. The AR technology enables medics to practice identifying and decontaminating real patients without any exposure to real radiation. The radiation is simply an AR overlay.

The great thing about killer apps is that they usher in more developers, more investors, and more consumer demand. Assuming the economy cooperates, I expect follow-on AR technology that expands far beyond mobile gaming. Think about it. Why bother putting a soft skin over a robot arm when you can create virtual skin with an AR routine? Why bother painting your bedroom walls when you can overlay a virtual wall-high video display onto them? Perhaps game AR will resurrect the concept of Google Glass, enabling everyone to one day live in an AR world. Does that sound like fun, or frightening? Let me know. For now, enjoy the game. It’s captivating, even for a non-gamer. SV

Posted by Larry Lemieux on 09/19 at 02:59 PM
